Exadata storage cell rolling restart caused datafile and redo log file header block corruptions

Posted by Kamran Agayev A. on 8th February 2020

24 hours passed – still at work. Struggling to start up the database which was corrupted during cell storage rolling restart procedure. And I’ve never seen some Oracle error messages that I saw today. So here what is happened:

################

Exadata storage cell failure during so-called “rolling cell storage restart”. Data file headers are corrupted for some files just because of rolling restart of storage cells and it can’t read the mirror file in the normal redundancy diskgroup as well!!! Both are corrupted! SR created – but there’s no reply!

################

Read of datafile '+###1/###i/datafile/###_6077.1015929889' (fno 1367) header failed with ORA-01208 Rereading datafile 1367 header from mirror side 'DA1_CD_05_CELADM02' failed with ORA-01208 Errors in file /u01/app/oracle/diag/rdbms/###i/###I_2/trace/###I_2_ckpt_360497.trc: ORA-63999: data file suffered media failure ORA-01122: database file 1367 failed verification check ORA-01110: data file 1367: '+DATAC1/###i/datafile/###.6077.1015929889' ORA-01208: data file is an old version - not accessing current version

################

Instance terminated in both RAC nodes!

################

License high water mark = 107 Instance terminated by CKPT, pid = 360497 USER (ospid: 173989): terminating the instance Instance terminated by USER, pid = 173989

################

Instance can’t be opened and media recovery is required!

################

Abort recovery for domain 0

Errors in file /u01/app/oracle/diag/rdbms/###i/###I_2/trace/###I_2_ora_175176.trc:

ORA-01113: file 10 needs media recovery

ORA-01110: data file 10: '+DATAC1/###i/datafile/###_4932.1028366333'

ORA-1113 signalled during: ALTER DATABASE OPEN /* db agent *//* {0:3:84} */...

NOTE: Deferred communication with ASM instance

NOTE: deferred map free for map id 1127

Fri Feb 07 16:27:06 2020

License high water mark = 1

USER (ospid: 175310): terminating the instance

Instance terminated by USER, pid = 175310

################

Datafiles are corrupted!

################

Abort recovery for domain 0 Errors in file /u01/app/oracle/diag/rdbms/###i/###I_2/trace/###I_2_ora_75964.trc: ORA-01122: database file 410 failed verification check ORA-01110: data file 410: '+DATAC1/###i/datafile/###_5420.1007284567' ORA-01207: file is more recent than control file - old control file ORA-1122 signalled during: alter database open...

################

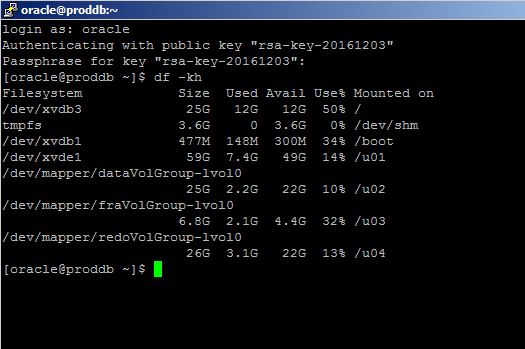

OMG! I didn’t do anything? Tried to restore some datafiles from backup and recover them. V$RECOVERY_FILE is empty now. Tried to start the database:

################

Abort recovery for domain 0 Aborting crash recovery due to error 742 Errors in file /u01/app/oracle/diag/rdbms/###i/###I_2/trace/###I_2_ora_75964.trc: ORA-00742: Log read detects lost write in thread %d sequence %d block %d ORA-00312: online log 4 thread 1: '+DATAC1/###i/onlinelog/group_4.961.997203859' Abort recovery for domain 0 Errors in file /u01/app/oracle/diag/rdbms/###i/###I_2/trace/###I_2_ora_75964.trc: ORA-00742: Log read detects lost write in thread %d sequence %d block %d ORA-00312: online log 4 thread 1: '+DATAC1/###i/onlinelog/group_4.961.997203859' ORA-742 signalled during: alter database open...

################

This is the first time ever I see “Log read detects lost write” message! It means LGWR thinks that the changes are written to the redo log files, but they are not! Meanwhile, SR 1 was created 2 hours ago – no response from Oracle! After an investigation we detected that the CURRENT logfile is corrupted which reside in the normal redundancy disk group! Oracle support guy replied to SR to run “recover database until cancel” command  Then the second guy came in and said don’t try this

Then the second guy came in and said don’t try this

################

################

During datafile restore, the first block (which is header) seemed to be corrupted in both ASM allocaiton units in different disks (cells) !!!

################

computed block checksum: 0x0 Reading datafile '+DATAC1/###i/datafile/###899.1007892167' for corruption at rdba: 0x67400001 (file 413, block 1) Read datafile mirror 'DAC1_CD_07_CELADM02' (file 413, block 1) found same corrupt data (no logical check) Read datafile mirror 'DAC1_CD_02_CELADM01' (file 413, block 1) found same corrupt data (no logical check) Hex dump of (file 414, block 1) in trace file /u01/app/oracle/diag/rdbms/###i/###I_2/trace/###I_2_ora_122826.trc Corrupt block relative dba: 0x67800001 (file 414, block 1) Bad header found during kcvxfh v8

################

Started restoring from backup (20TB) to the different machine. Seems to be the only way to restore the service. Andddd ….. Recovery interrupted!

################

Errors with log /backup1/###I/ARCH/thread_2_seq_1169.14245.1031731123 Errors in file /u01/app/oracle/diag/rdbms/###i/###I_1/trace/###I_1_pr00_81255.trc: ORA-00310: archived log contains sequence 1169; sequence 1160 required ORA-00334: archived log: '/backup1/###I/ARCH/thread_2_seq_1169.14245.1031731123' ORA-310 signalled during: ALTER DATABASE RECOVER LOGFILE '/backup1/###I/ARCH/thread_2_seq_1169.14245.1031731123' ... ALTER DATABASE RECOVER CANCEL Signalling error 1152 for datafile 2!

################

RMAN is looking for the archived log file that was backed up and deleted in the beginning of the backup and wasn’t restored.

Aaaaandddddd …….. “OPEN RESETLOGS would get error” message! Are you kidding me?

################

Errors in file /u01/app/oracle/diag/rdbms/###i/###I_1/trace/###I_1_pr00_81255.trc: ORA-01547: warning: RECOVER succeeded but OPEN RESETLOGS would get error below ORA-01152: file 2 was not restored from a sufficiently old backup ORA-01110: data file 2: '+DATAC1/###i/datafile/sysaux.2948.1031790467' ORA-1547 signalled during: ALTER DATABASE RECOVER CANCEL ...

################

Cataloged some missing backup files, restored required archived log files and the recovery proceeded. But we got another error!

################

File #142 added to control file as 'UNNAMED00142'. Originally created as: '+DATAC1/###i/datafile/###_2020_idx.797.1031737697' Errors with log /backup2/###I/ARCH/thread_2_seq_1176.9418.1031737965 Recovery interrupted! Recovery stopped due to failure in applying recovery marker (opcode 17.30). Datafiles are recovered to a consistent state at change 8534770504316 but controlfile could be ahead of datafiles. Media Recovery failed with error 1244 Errors in file /u01/app/oracle/diag/rdbms/###i/###I_1/trace/###I_1_pr00_323548.trc: ORA-00283: recovery session canceled due to errors ORA-01244: unnamed datafile(s) added to control file by media recovery ORA-01110: data file 142: '+DATAC1/###i/datafile/###797.1031737697'

################

Some datafiles were added after the last controlfile backup (and controlfile auto backup was not enabled) and those datafiles are created with UNNAMED name. Renamed datafiles and started the recovery again

################

At the end, opened database successfully. Changed 4 different support engineers, some of them seemed junior for me. They just copied some steps from metalink notes and sent to me. The reason is still under investigation

Posted in Uncategorized | 4 Comments »